Management guru Peter Drucker’s best-known maxim applies squarely to voicebot performance: “If you can’t measure it, you can’t improve it.”

Aren’t CXOs constantly debating the expenditure on technology and its RoI? While a razor-sharp focus on the end results is well warranted, the choice of metric is very important, too. Businesses can succeed only when technology goals are linked to the business goals, and they finally crystallize as positive outcomes.

Contact centers are one of the most dynamic types of organizations that have been on a relentless hunt for automation solutions. They measure outputs with awe-inspiring precision and optimize their process to be more effective and cost-efficient.

Often, and mistakenly, contact centers treat containment rate as the most important metric when measuring voicebot performance. In this article, we examine the limitations and dangers of using containment rate as an absolute measure of voicebot performance.

What is Containment Rate?

The containment rate is the percentage of users who interact with an automated service and leave without speaking to a live human agent.

When a customer ends a customer service interaction without the need to speak to a human agent, the call is said to be contained. While that may be great news in terms of resource optimization and better usage of human agent bandwidth, what does it really reveal about the customer’s experience? The containment rate does not reveal whether the customer’s query was resolved or if the customer was satisfied. Nor does it reveal anything about the effectiveness of your voicebot or even the IVR.
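To make the gap concrete, here is a minimal sketch that computes containment rate alongside a resolution rate for the same calls. The `Call` record, its two fields, and the sample data are assumptions made up for illustration; a real contact center would derive these labels from its own call logs.

```python
from dataclasses import dataclass


@dataclass
class Call:
    """One inbound call. Both fields are hypothetical labels for this sketch."""
    transferred_to_agent: bool  # caller reached a live human agent
    query_resolved: bool        # caller's issue was actually resolved


def containment_rate(calls: list[Call]) -> float:
    """Share of calls that ended without reaching a human agent."""
    contained = sum(1 for c in calls if not c.transferred_to_agent)
    return contained / len(calls)


def resolution_rate(calls: list[Call]) -> float:
    """Share of calls where the issue was resolved, by bot or by agent."""
    return sum(1 for c in calls if c.query_resolved) / len(calls)


# Illustrative data: three calls are "contained", but two of those callers
# simply hung up without an answer.
calls = [
    Call(transferred_to_agent=False, query_resolved=True),
    Call(transferred_to_agent=False, query_resolved=False),
    Call(transferred_to_agent=False, query_resolved=False),
    Call(transferred_to_agent=True, query_resolved=True),
]

print(f"containment rate: {containment_rate(calls):.0%}")
print(f"resolution rate:  {resolution_rate(calls):.0%}")
```

With this sample, three of four calls are contained while only two of four are resolved, which is exactly the blind spot described above.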

Why Containment Rate Goes Against the Principle of CX

If your goal as a company is to prevent your customers from reaching a human agent for support, then the containment rate is the best metric. But is that strategy reflective of your vision?

Ideally, in a world with no resource constraints, there would be a human agent ready to answer every customer’s call. But the cost factor proves to be prohibitive, resulting in the need to find a cost-effective and scalable means to improve CX. The technology deployed may range from mundane IVR to state-of-the-art Voice AI. But if the focus is just on increasing the containment rate, it will end up damaging CX.

Every call is an opportunity to forge a long-lasting relationship that can help a company improve its top and bottom line, over time.

What are Voice AI Agents or voicebots deployed for? It is to serve the customers better, provide zero wait-time and 24/7 support, and not prevent them from reaching human agents. The general idea is to promote self-service, yes, but if a customer wants to interact with the company, closing that door is not an ideal way to achieve customer satisfaction.

Hence, the containment rate must be seen in the context of other metrics while deciding if the performance of a voicebot is improving or not. Here are the situations where containment rates can be a misguided yardstick:

- Increasing Containment Rates: Seen in isolation, this can look like an improvement. But customers may be ending calls because the Automatic Speech Recognition (ASR) engine is not recognizing their voice or words, or because poorly optimized conversation flows are frustrating them. There are several other situations where customers hang up because their queries are not resolved. In all of these, the containment rate may rise, but at the cost of CX.

- Decreasing Containment Rates – Scenario 1: Calls can be classified into two categories: completely successful calls and partially successful calls. Often a voicebot can answer customer queries and collect information, but for more complex questions or disputes, customers may ask for a human agent. Containment rates may decrease in these cases, but CX will improve: the voicebot eliminated waiting time and answered the basic questions, and the data it collected helped the human agent resolve the query quickly. If we look only at the containment rate, we might assume that the voicebot has performed poorly, which can lead to bad business decisions.

- Decreasing Containment Rates – Scenario 2: Every Voice AI Agent is trained for certain use cases, and that is what makes it more effective than a horizontal AI solution. When the Voice AI Agent handles all calls but is trained for a limited set of use cases, the containment rate will vary with the mix of in-scope and out-of-scope calls. Hence, the generic or overall containment rate is the wrong measure of voicebot performance.
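The effect in Scenario 2 is simple arithmetic, sketched below. The function name and all numbers are illustrative assumptions: the voicebot is assumed to contain 80% of in-scope calls and none of the out-of-scope ones, and only the call mix changes.

```python
def overall_containment(in_scope_share: float,
                        in_scope_containment: float,
                        out_of_scope_containment: float = 0.0) -> float:
    """Blend per-segment containment rates by the share of each call type."""
    out_of_scope_share = 1 - in_scope_share
    return (in_scope_share * in_scope_containment
            + out_of_scope_share * out_of_scope_containment)


# Same voicebot, same in-scope behavior; only the call mix differs.
for share in (0.9, 0.6, 0.3):
    rate = overall_containment(share, in_scope_containment=0.8)
    print(f"in-scope share {share:.0%} -> overall containment {rate:.0%}")
```

Under these assumptions the overall figure drops as out-of-scope volume grows, even though the voicebot performs identically on the calls it was trained for.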

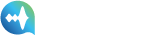

The 10 Most Ideal Voicebot Performance Metrics

All the discussion here concerns inbound calls.

Once again, no metric should be studied in a vacuum; the true picture emerges only when the metrics are read together. With that caveat, these are the performance metrics that make the most sense:

Business-related metrics: KPIs that focus on business impact and Voice AI objectives.

1. Service Level

It is defined as the percentage of calls answered within a predefined amount of time. It can be measured over 30 minutes, 1 hour, 1 day, or 1 week. Also, it can be measured for each agent, team, department, or company as a whole.

A 90/30 Service Level objective means that the goal is to answer 90% of calls in 30 seconds or less.

Service Level is closely tied to customer service quality and the overall performance of a call center. It is therefore a better measure of performance than containment rate and a better basis for key decisions. Deploying a voicebot should raise service levels immediately and thus create business benefits.
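A 90/30 check can be sketched in a few lines. The function name and sample answer times are assumptions for illustration, and the sketch counts only answered calls; how abandoned calls are treated varies between contact centers.

```python
def service_level(answer_times_s: list[float], threshold_s: float) -> float:
    """Fraction of calls answered within threshold_s seconds."""
    answered_in_time = sum(1 for t in answer_times_s if t <= threshold_s)
    return answered_in_time / len(answer_times_s)


# Hypothetical seconds-to-answer for ten calls in one reporting interval.
answer_times = [5, 12, 8, 45, 20, 3, 29, 31, 15, 10]

level = service_level(answer_times, threshold_s=30)
meets_90_30 = level >= 0.90  # 90/30 objective: 90% answered within 30 seconds

print(f"service level: {level:.0%}, meets 90/30 target: {meets_90_30}")
```

In this sample, eight of ten calls are answered within 30 seconds, so the interval misses a 90/30 objective.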

2. First Call Resolution Rate (FCRR)

A call is marked resolved when the voicebot grasps the user’s query and does everything right to assist them, so that the issue is resolved in the first call itself, even if that means connecting the user with a human agent. FCRR is an important metric because it shows whether the voicebot is performing correctly for the use cases it is designed for and how well it escalates calls.

Though a relatively marginal case for inbound calls, a high FCRR also affects customer acquisition cost (CAC) and retention. Instant call pickup, intelligent conversation, and answers to the customer’s query and any follow-on questions can shorten the time between customer query and purchase.

A higher FCRR also goes a long way toward increasing and maintaining customer retention, and it helps offset higher costs per call.

3. In-Scope Call Success Rate

Though contact centers can measure the overall success rate, a better metric is the in-scope success rate. At any given moment, a voicebot may be trained for a limited set of use cases. For example, a Voice AI Agent might be equipped to handle PNR queries or schedule maintenance visits, but when a call goes beyond this scope, it should pass the call to a human agent. Hence, true success can be measured only when the success rate is calculated over in-scope calls alone.

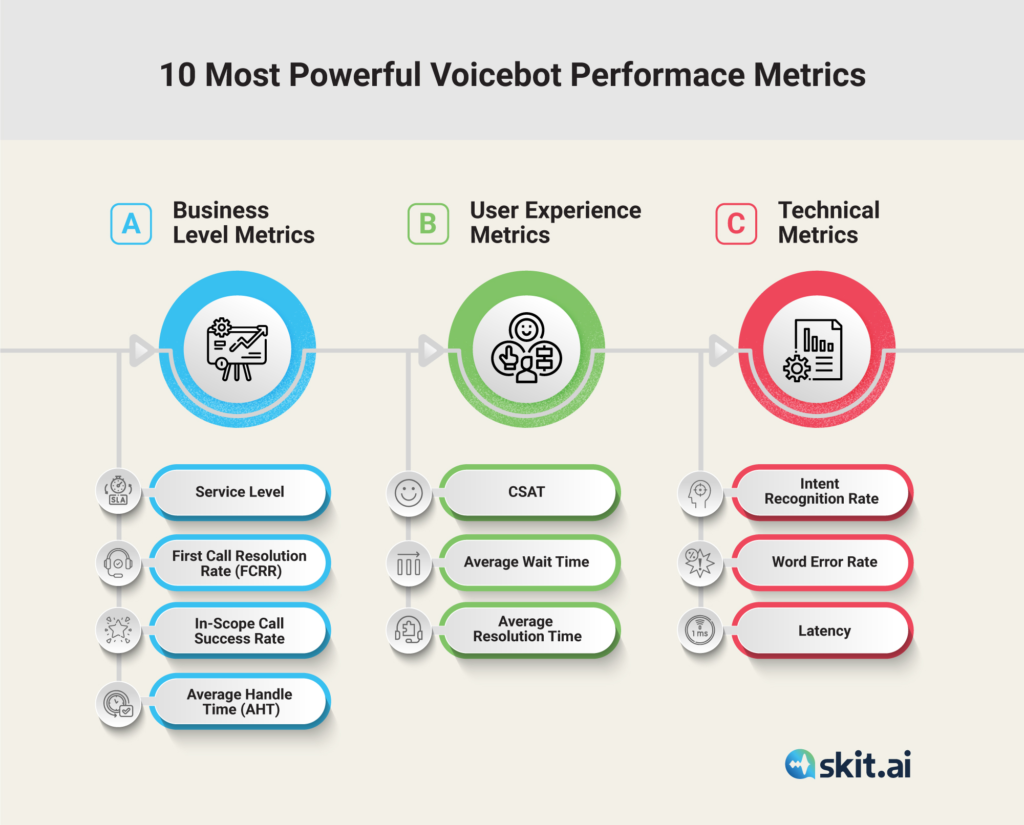
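The difference between the overall and in-scope success rates can be sketched as follows. The `CallOutcome` record, its fields, and the sample counts are hypothetical; in practice the in-scope flag would come from however a deployment tags its supported use cases.

```python
from dataclasses import dataclass


@dataclass
class CallOutcome:
    """Hypothetical per-call labels used only for this sketch."""
    in_scope: bool    # call matched a use case the voicebot is trained for
    successful: bool  # voicebot handled the call as intended


def success_rate(calls: list[CallOutcome]) -> float:
    """Share of successful calls among the calls given."""
    if not calls:
        raise ValueError("no calls to measure")
    return sum(1 for c in calls if c.successful) / len(calls)


def in_scope_success_rate(calls: list[CallOutcome]) -> float:
    """Success rate computed over in-scope calls only."""
    return success_rate([c for c in calls if c.in_scope])


# Illustrative mix: 6 in-scope calls (5 succeed) and 4 out-of-scope calls.
outcomes = (
    [CallOutcome(in_scope=True, successful=True)] * 5
    + [CallOutcome(in_scope=True, successful=False)]
    + [CallOutcome(in_scope=False, successful=False)] * 4
)

print(f"overall success rate:  {success_rate(outcomes):.0%}")
print(f"in-scope success rate: {in_scope_success_rate(outcomes):.0%}")
```

Here the overall rate is dragged down by calls the voicebot was never trained to handle, while the in-scope rate reflects how it performs on its actual use cases.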

4. Average Handle Time (AHT) – In-scope Agent Transfer AHT and End-to-end Automation AHT

To understand better, let’s compare the AHT in the two scenarios where a Voicebot must create value.

- AHT Comparison for End-to-end Automation – For a specific set of use cases the voicebot is designed to answer every query without the need for a human agent. The average call handling time AHT 1, as shown in the graph above, can be compared with a similar use case answered by a human agent.

Note that the per-minute cost of a voicebot call is typically much lower than that of a human agent, around one-seventh (though the exact ratio varies by deployment). Hence, even if the voicebot takes the same amount of time to resolve the query, the call costs roughly one-seventh as much.

- AHT Comparison for Escalated Calls: Interestingly, AHT can be compared even when the voicebot forwards the call to a human agent. This is because the voicebot captures essential data first: it verifies the caller’s identity, captures their intent, and forwards the call to the human agent, who can pick up the conversation where it left off.

If the AHT of an escalated call is lower than that of a call handled entirely by a human agent, then the voicebot is creating value even for out-of-scope calls.

If the voicebot is escalating calls for use cases it is trained for, it needs improvement. If it is escalating out-of-scope calls, then it is functioning as intended, and this information can still be used for broader decision-making.
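A rough worked example of both comparisons is sketched below. Only the one-seventh cost ratio comes from the discussion above; the per-minute rate and all durations are made-up figures chosen for illustration.

```python
HUMAN_COST_PER_MIN = 0.70                  # hypothetical currency units
BOT_COST_PER_MIN = HUMAN_COST_PER_MIN / 7  # the one-seventh ratio cited above


def call_cost(bot_minutes: float, human_minutes: float) -> float:
    """Cost of one call given the minutes spent with the bot and with a human."""
    return bot_minutes * BOT_COST_PER_MIN + human_minutes * HUMAN_COST_PER_MIN


# Illustrative handle times in minutes.
human_only = call_cost(bot_minutes=0, human_minutes=6)
fully_automated = call_cost(bot_minutes=6, human_minutes=0)  # same duration
escalated = call_cost(bot_minutes=1.5, human_minutes=3)      # bot pre-collects data

print(f"human only:      {human_only:.2f}")
print(f"fully automated: {fully_automated:.2f}")
print(f"escalated:       {escalated:.2f}")
```

With these assumed numbers, the fully automated call costs one-seventh of the human-only call at the same duration, and the escalated call is still cheaper because the agent spends fewer minutes on it.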

Scenario: Agent Transfer After Resolution Due to Dispute or Second Query

Atypical conditions often arise in which the customer simply wants to speak with an agent. For example, when an insurance claim is rejected, the customer invariably wants to speak with a human agent to vent their frustration. The voicebot is not responsible when a call escalates to a live agent in such cases, because the situation itself warrants human intervention. Such calls must therefore not be counted when assessing the performance of the voicebot.

Analysis at this depth is possible only when metrics like these are used to evaluate voicebot performance and business gains.

User Experience Metrics: Companies must focus on CX that is useful, engaging, and enjoyable; creating a positive image that leads to product purchases, referrals, repeat purchases, and loyalty.

5. CSAT

Finally, the moment of truth, the CSAT score. It is a result of the overall performance of the voicebot. It is a good measure because ultimately, everything is futile if the voicebot doesn’t move the needle on CSAT scores. You can have a high containment rate to boast about, but if your corresponding CSAT scores are falling, your business performance will suffer significantly.

6. Average Wait Time

A company has a decision to make: it can route every call through the Voice AI agent, which brings the average wait time down to zero. Wait times have a serious and direct bearing on CX. Deploying the digital voice agent on every call is one sure way to engage customers without making them wait or frustrating them further with IVRs.

7. Average Resolution Time

Once the customer is connected and speaking with an agent (human or Voice AI), the time it takes to resolve the query matters a great deal to them. This number must be tracked when CX is a priority.

Technical Metrics: Ensure the conversational AI product works and adheres to the requirements for performance or latency.

8. Intent Recognition Rate: The most important voicebot performance metric. It refers to the accuracy with which the voicebot captures the speaker’s intent. This matters because a voicebot can troubleshoot only when it has captured the intent accurately.

9. Word Error Rate (WER): The proportion of words the ASR engine gets wrong; the lower it is, the more accurately the ASR recognizes words. A higher WER does not necessarily mean inferior outcomes if intent recognition remains high. Still, the higher the ASR accuracy, the better.
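As a minimal sketch, WER is commonly computed as the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and the ASR output, divided by the number of reference words. The function below assumes simple whitespace tokenization, and the sample sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # matching i reference words against nothing = i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "i want to check my booking status"
hypothesis = "i want to check my cooking status"

print(f"WER: {word_error_rate(reference, hypothesis):.1%}")
```

In this example one word out of seven is misrecognized, yet a downstream intent model could plausibly still identify a status check, which is why WER and intent recognition are worth tracking separately.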

10. Latency: Latency is the delay in response. Unlike chatbots, voicebots need to respond quickly, or they risk losing the customer’s attention and being dismissed as ineffective. Typically, a voicebot’s latency is the sum of the latencies of its stages: ASR + SLU + FSM + TTS.

A total latency of 1-2 seconds is typically good, though the lower the better.
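The latency sum can be tracked as a simple per-stage budget. The stage timings below are invented for illustration; real values would come from instrumenting each component of the pipeline.

```python
# Hypothetical per-stage latencies for one conversational turn, in milliseconds.
stage_latency_ms = {"ASR": 400, "SLU": 150, "FSM": 50, "TTS": 500}

BUDGET_MS = 2000  # upper end of the 1-2 second range mentioned above

total_ms = sum(stage_latency_ms.values())
within_budget = total_ms <= BUDGET_MS
slowest_stage = max(stage_latency_ms, key=stage_latency_ms.get)

print(f"total latency: {total_ms} ms (within budget: {within_budget})")
print(f"slowest stage: {slowest_stage}")
```

Breaking the total down by stage shows where optimization effort would pay off first.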

Embrace Metrics that Truly Measure Intelligent Conversations

Stop treating call containment rate as an absolute reflection of voicebot performance. Yes, it holds value, but on its own it is not true to the purpose of creating a voicebot.

Measuring and monitoring the right metrics will help you capture voicebot performance precisely and thus enable you to improve it. Only then will the voicebot deliver the cost and CSAT advantages it was deployed for.
